Read time: under 9 minutes

Welcome to this week's edition of The Legal Wire!

This week’s signal was clear: as AI capabilities climb, the pressure shifts to governance. In the U.S., a federal judge’s Heppner ruling put “ChatGPT for legal strategy” on notice, warning that materials generated via third-party AI tools may not be protected by attorney-client privilege if the tool may collect, retain, or disclose what you feed it.

At the same time, “Claude Mythos” set off a high-level cyber alarm across global finance, with officials urging banks to treat vulnerability-finding models as both a defensive asset and a systemic risk. And with Washington keeping a lighter regulatory touch, unions are moving to fill the guardrail gap directly in contracts through notice requirements, bargaining rights, and work-preservation clauses, while the FTC signals it will police scams and “AI-washing,” not act as the default AI regulator.

Our feature this week follows the same arc from capability to control: Flank, and the shift from legal workflows to legal execution, with agents that do the work first and then route it to lawyers for supervised review.

This week’s Highlights:

Industry News and Updates

Flank and the shift from legal workflows to legal execution

AI Regulation Updates

AI Tools to Supercharge your productivity

Legal prompt of the week

Latest AI Incidents & Legal Tech Map

Headlines from The Legal Industry You Shouldn't Miss

➡️ Unions Move to Fill the AI Guardrail Gap | Labor groups say Trump’s new AI framework leaves too many workplace risks unaddressed, pushing unions to negotiate protections directly into contracts: job security, advance notice of new tech, and guardrails on how AI is deployed. As federal rules remain limited, recent wins by unions in casinos, newsrooms, and entertainment show a pattern: require notice and bargaining over AI’s impact, protect name/image/likeness from AI replicas, and lock in training or work-preservation clauses to prevent tech-driven layoffs.

Apr 20, 2026, Source: Bloomberg

➡️ Claude Mythos Triggers High-Level Cyber Alarm for Global Finance | Finance ministers, central bankers, and bank CEOs warn that Anthropic’s unreleased “Claude Mythos” model could materially raise cyber risk for the financial system, after it reportedly identified vulnerabilities in major operating systems. Governments and banks have access ahead of any public release so they can test and patch systems, with the model currently limited to a “Project Glasswing” group of large tech and security firms. Independent testing by the AI Security Institute found Mythos can exploit weakly defended environments.

Apr 17, 2026, Source: BBC

➡️ Musk v OpenAI Heads to Two-Phase Trial Starting April 27 | Musk, OpenAI, Microsoft and the other defendants have agreed to a two-phase trial structure starting April 27, splitting the case into a liability phase followed by a remedies phase. Under the proposed setup, the same jury would hear liability first, then stay on in an advisory capacity on remedies, ultimately giving a non-binding recommendation to U.S. District Judge Yvonne Gonzalez Rogers on what relief (if any) should be ordered.

Apr 16, 2026, Source: MLex

➡️ Heppner ruling puts “ChatGPT for legal strategy” on notice | A federal judge in New York ruled in United States v. Heppner that dozens of documents a defendant generated through a generative AI tool that may collect, retain, and potentially disclose user inputs, and then forwarded to his lawyers, were not protected by attorney-client privilege. The court also underscored that sending non-privileged materials to counsel doesn’t make them privileged after the fact, and that work-product protection is hard to claim when the materials weren’t prepared by or at the direction of counsel.

Apr 15, 2026, Source: The National Law Review

➡️ FTC’s AI Stance: Enforce the Scams, Not the Whole Category | FTC Chair Andrew Ferguson told the Senate Commerce Committee the agency will use its existing powers to pursue AI-enabled fraud and deceptive claims (including “AI-washing”), but he doesn’t want the FTC acting as a broad, default AI regulator. He framed the approach as targeted, case-by-case enforcement, especially around consumer harm and children’s privacy, while urging Congress to set comprehensive AI and privacy rules that the FTC can then enforce.

Apr 15, 2026, Source: Law360

Will this be the Next Big Thing in A.I?

Legal Technology

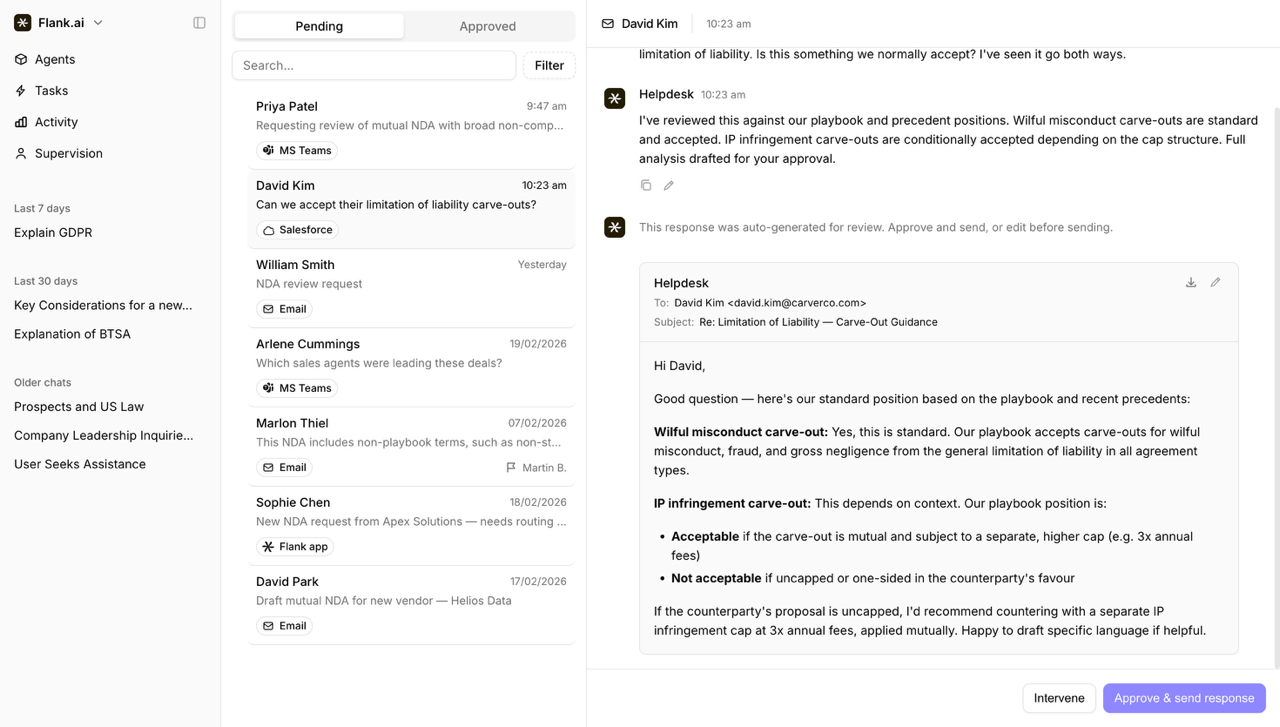

Flank and the shift from legal workflows to legal execution

Legal teams have spent the better part of a decade refining how work moves through their department. Intake tools, contract lifecycle management platforms, and more recently AI copilots have all attempted to make that process more efficient.

But the structure itself has largely remained intact. Work arrives. A lawyer picks it up. Technology assists along the way.

Flank takes aim at that structure directly.

The company’s proposition is that enterprise legal teams can insource routine work to AI agents rather than sending it to outside counsel or contract lawyers. It is built on a specific assumption: that a meaningful portion of legal work does not need to be assisted; it can be executed. That distinction changes where the primary bottleneck arises and, by extension, what the lawyer’s role looks like.

From assistance to execution

Much of the current generation of legal AI sits alongside the lawyer. It drafts faster, searches more broadly, and surfaces risks more efficiently. But the lawyer remains the operator.

Flank shifts that role.

Its agents sit inside the tools the business uses from day to day, most notably email, and pick up inbound legal requests directly. The process is essentially this: a commercial team sends an NDA or a question to a shared inbox. The agent reads it, determines what is required, and executes the task, be it drafting, reviewing, redlining, or responding. Only once the work is complete does it reach legal, where it is reviewed through a supervision layer before being sent back.
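For readers who think in code, the flow described above can be sketched in a few lines of Python. This is only an illustration of the "execute first, supervise after" pattern, not Flank's actual product or API: every name here (`Request`, `classify`, `execute`, `SupervisionQueue`) is hypothetical, and the keyword triage is a toy stand-in for whatever the agent really does to understand a request.

```python
from dataclasses import dataclass, field
from enum import Enum


class Task(Enum):
    DRAFT = "draft"
    REVIEW = "review"
    RESPOND = "respond"


@dataclass
class Request:
    """An inbound message to the shared legal inbox (hypothetical shape)."""
    sender: str
    subject: str
    body: str
    has_attachment: bool = False


@dataclass
class WorkProduct:
    request: Request
    task: Task
    output: str
    approved: bool = False


def classify(request: Request) -> Task:
    """Toy keyword triage standing in for the agent reading the request."""
    text = f"{request.subject} {request.body}".lower()
    if request.has_attachment:
        return Task.REVIEW  # an attached document is treated as something to review
    if "draft" in text or "nda" in text:
        return Task.DRAFT
    return Task.RESPOND


def execute(request: Request) -> WorkProduct:
    """Do the work before any lawyer sees it; the output here is a placeholder."""
    task = classify(request)
    output = f"[{task.value}] placeholder work product for: {request.subject}"
    return WorkProduct(request, task, output)


@dataclass
class SupervisionQueue:
    """Completed work waits here until a lawyer signs off."""
    pending: list = field(default_factory=list)

    def submit(self, work: WorkProduct) -> None:
        self.pending.append(work)

    def approve(self, work: WorkProduct) -> str:
        """Mark the work approved and return the reply sent to the requester."""
        work.approved = True
        self.pending.remove(work)
        return f"To {work.request.sender}: {work.output}"


# Usage: a commercial team asks for an NDA; nothing goes back until approval.
queue = SupervisionQueue()
work = execute(Request("sales@example.com", "NDA for new vendor", "Please draft an NDA."))
queue.submit(work)
reply = queue.approve(work)
```

The point of the sketch is the ordering: the lawyer's first contact with the request is the `approve` step, after the work already exists.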

The AI Regulation Tracker offers a clickable global map that gives you instant snapshots of how each country is handling AI laws.

The most recent developments from the past week:

📋 17 April 2026 | UK technology secretary urges UK public to embrace AI as government makes first £500m fund investment: The UK government has made the first investment from its £500m sovereign AI fund, with an undisclosed stake in London-based chip-efficiency company Callosum. Technology Secretary Liz Kendall urged the public to “make AI work for Britain” despite disruption and security concerns. Alongside the investment, the government plans to give six UK firms access to publicly funded supercomputers to help them develop AI models, framing the push as backing “national champions” to keep high-growth AI businesses competitive and anchored in the UK.

📋 16 April 2026 | National AI Strategy Committee launches security task force to address Claude Mythos: South Korea’s National AI Strategy Committee has formed a new Security Special Committee to respond to rising cyber risks linked to advanced generative models like “Claude Mythos,” with an initial focus on tightening expectations for installable security software in the financial sector. At its first meeting, officials warned that more capable AI could surface previously hidden vulnerabilities, pushed for security tools that are less disruptive, and backed longer-term plans such as AI-driven real-time defenses and deeper international security coordination.

📋 14 April 2026 | Insurers seek exclusion of risk-assessment models from EU AI Act: EIOPA has asked EU lawmakers to clarify how the AI Act applies to insurance, arguing that standard, transparent pricing models like GLMs and GAMs (e.g., linear/logistic regression) should be explicitly excluded from the Act’s “high-risk” category when used under human supervision. It warns that treating these long-established tools as high-risk would add compliance burden and drain supervisory resources without clear consumer benefit, and proposes Digital Omnibus tweaks plus a stronger role for EU supervisors to avoid overlap with Solvency II and DORA.

AI Tools that will supercharge your productivity

🆕 Volody - Secure, smart, and simple AI contract management software.

🆕 Onit - The AI-native platform for managing legal matters, controlling legal spend, and running legal operations at scale.

🆕 Streamline AI - Streamline AI automates intake, routing, and approvals workflows so your team can focus on strategy, not data entry.

Want more Legal AI Tools? Check out our Top AI Tools for Legal Professionals.

The weekly ChatGPT prompt that will boost your productivity

Why it helps: Delivers a structured, citation-backed snapshot of the law in minutes.

Prompt:

Research [TOPIC] in [JURISDICTION(S)] for [AUDIENCE: partner/client/internal memo].

Return a concise research pack that includes:

1. The governing legal rule(s) and any relevant tests/standards.

2. The leading authorities: cases, statutes, regulations, and (if relevant) agency guidance.

3. For each key case: court, year, holding, and why it matters (1–2 sentences).

4. Any splits, trends, or unsettled issues, including minority rules.

5. Practical application: how the law applies to a typical fact pattern in this area and common pitfalls.

6. Next-step research leads: keywords, treatises/practice guides, and questions to confirm with primary sources.

Provide full citations for every authority.

Include links or source identifiers where available (e.g., official code/agency page, Westlaw/Lexis citation if used).

Do not fabricate citations—if uncertain, say “unable to verify” and suggest where to confirm.

Keep the output organized with clear headings and a short conclusion.
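If you reuse this prompt often, a small script can fill in the bracketed placeholders for you. The following is a minimal sketch, assuming the placeholders keep the `[NAME]` or `[NAME: hint]` form used above; the function name `fill_prompt` and the sample values are our own illustration, not part of any tool.

```python
import re

# First line of the prompt above; the remaining lines contain no placeholders.
TEMPLATE = "Research [TOPIC] in [JURISDICTION(S)] for [AUDIENCE: partner/client/internal memo]."


def fill_prompt(template: str, values: dict) -> str:
    """Replace each [NAME] or [NAME: hint] placeholder with values[NAME].

    Raises KeyError if a placeholder has no value, so a half-filled
    prompt is never sent by accident.
    """
    def substitute(match: re.Match) -> str:
        name = match.group(1).strip()
        if name not in values:
            raise KeyError(f"no value supplied for placeholder {name!r}")
        return values[name]

    # Group 1 captures the name; an optional ": hint" part is discarded.
    return re.sub(r"\[([^\]:]+)(?::[^\]]*)?\]", substitute, template)


prompt = fill_prompt(
    TEMPLATE,
    {
        "TOPIC": "attorney-client privilege for AI-generated documents",
        "JURISDICTION(S)": "U.S. federal courts",
        "AUDIENCE": "an internal memo",
    },
)
```

Failing loudly on a missing value is a deliberate choice here: a prompt that still contains `[JURISDICTION(S)]` tends to produce vague, jurisdiction-free answers.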

Collecting Data to make Artificial Intelligence Safer

The Responsible AI Collaborative is a not‑for‑profit organization working to present real‑world AI harms through its Artificial Intelligence Incident Database.

View the latest reported incidents below:

⚠️ 2026-03-02 | DOJ Attorney Reportedly Used AI to File Brief With Purportedly Fabricated Quotes and Misstated Case Holdings

⚠️ 2025-07-01 | Delaware Court Found Krafton Followed Most of ChatGPT's Recommendations in Campaign that Wrongfully Terminated Unknown Worlds Executives and Seized Operational Control

⚠️ 2024-12-01 | Ohio Man Pleaded Guilty after Prosecutors Alleged He Used AI to Create and Distribute Nonconsensual Intimate-Image Forgeries Including CSAM in Harassment Campaign

Thank you so much for reading The Legal Wire newsletter!

If this email lands in your “Promotions” or “Spam” folder, move it to the primary folder so you do not miss out on the next Legal Wire :)

Did we miss something or do you have tips?

If you have any tips for us, just reply to this e-mail! We’d love any feedback or responses from our readers 😄

Disclaimer

The Legal Wire takes all necessary precautions to ensure that the materials, information, and documents on its website, including but not limited to articles, newsletters, reports, and blogs ("Materials"), are accurate and complete.

Nevertheless, these Materials are intended solely for general informational purposes and do not constitute legal advice. They may not necessarily reflect the current laws or regulations.

The Materials should not be interpreted as legal advice on any specific matter. Furthermore, the content and interpretation of the Materials and the laws discussed within are subject to change.